---

title: Nonlinear Modelling using nls, nlme and brms

author: Granville Matheson

date: '2020-03-28'

slug: nonlinear-modelling-using-nls-nlme-and-brms

categories:

- Blog

tags:

- Nonlinear

- Modelling

- Statistics

- R

- Day job

- Bayesian

- Pharmacokinetic Modelling

subtitle: "a.k.a. When straight lines don't provide enough of a thrill any longer"

description: 'A brief demonstration to grok the syntax for fitting the same model using nonlinear least squares, and frequentist and Bayesian multilevel modelling'

image: "/images/intervals.png"

---

# Background

A while back, I was messing about with a simple pharmacological model called the Hill function in order to figure out the syntax for nonlinear modelling using several different tools in R. The exercise was helpful for me, and I've gone back to it several times to grab the syntax for new applications, so I thought I'd write it up for others (and also so I don't have to go and find it each time on my own computer..).

```{r}

#remotes::install_github("mathesong/kinfitr")

library(tidyverse)

library(kinfitr)

library(nls.multstart)

library(nlme)

library(brms)

library(hrbrthemes)

library(broom)

library(viridis)

colourcodes <- c("#d4a665", "#d27fff", "#7fd9ff")

colourpal <- c(NLS="#d4a665", NLME="#d27fff", MCMC="#7fd9ff")

theme_set(hrbrthemes::theme_ipsum_rc())

```

# The Application, the Model and the Data

### Application

In my field of PET pharmacokinetic modelling, we need to correct a set of measurements of blood radioactivity for several factors, which are themselves curves which are measured over time (more about that [here](https://www.granvillematheson.com/post/pharmacokinetic-modelling-of-pet-data-in-r-using-kinfitr-part-4-blood-processing/)). It's worth noting that these measurements can be made with a sometimes substantial degree of error: there can be mistakes made, or failures of the apparatus.

### Model

The Hill Function is one of the models we use for modelling the remaining proportion of the tracer which is not metabolised. This is called the parent fraction.

### Data

The data I'll use here comes from a dataset of [$^{11}$C]PBR28 data. The data can be found in the [kinfitr](https://github.com/mathesong/kinfitr) package using the following:

```{r}

data(pbr28)

```

And looking in the Metabolite section of each individual's JSON data. Note that the durations are set to zero, because they were never recorded.

```{r, eval=FALSE}

# Old code - package has changed since this was written

pbr28$json_data[[1]]$Metabolite

```

So, now let's put everything together a little bit more neatly.

```{r}

# Updated code for current kinfitr package version

pfdata <- pbr28 %>%

mutate(pf = map(blooddata, ~bd_extract(.x, "parentFraction"))) %>%

select(PET, Subjname, PETNo, Genotype, pf)

```

# Fitting using nonlinear least squares (NLS)

First, I'll go through how to fit these curves one by one using NLS. This is the most common way that this is currently performed in the field. NLS makes use of gradient descent to find the set of parameters which maximise the likelihood of the data under them. We can analogise this by considering the algorithm hopping around parameter space to find the lowest point (i.e. with the lowest 'cost' - the residual sum of squares). We could do this by exploring a grid of potential parameter values, but this is extremely inefficient, and would only be as fine-grained as our grid was. Instead, the direction and size of the jumps are determined by the gradients of the cost function below its feet, i.e. if it feels that it's on a downhill, it will jump in the direction that takes it down fastest. It will stop jumping when it feels that it is flat underfoot, and that all directions go upwards.

So, gradient descent is more efficient than a grid of locations. However, gradient descent can land in so-called *local minima*. These are positions that are the minimum in their local neighbourhood, but not the lowest point in the whole of parameter space. To get around this problem, and to avoid fitting failures, we can fit the model multiple times, starting in different locations, and choose the one with the best fit: that's what the [nls.multstart](https://github.com/padpadpadpad/nls.multstart) package does. This package uses the *minpack.lm* package under the hood, which makes use of the Levenberg–Marquardt algorithm, and fits with a set of different starting parameters.

## Fitting a single curve

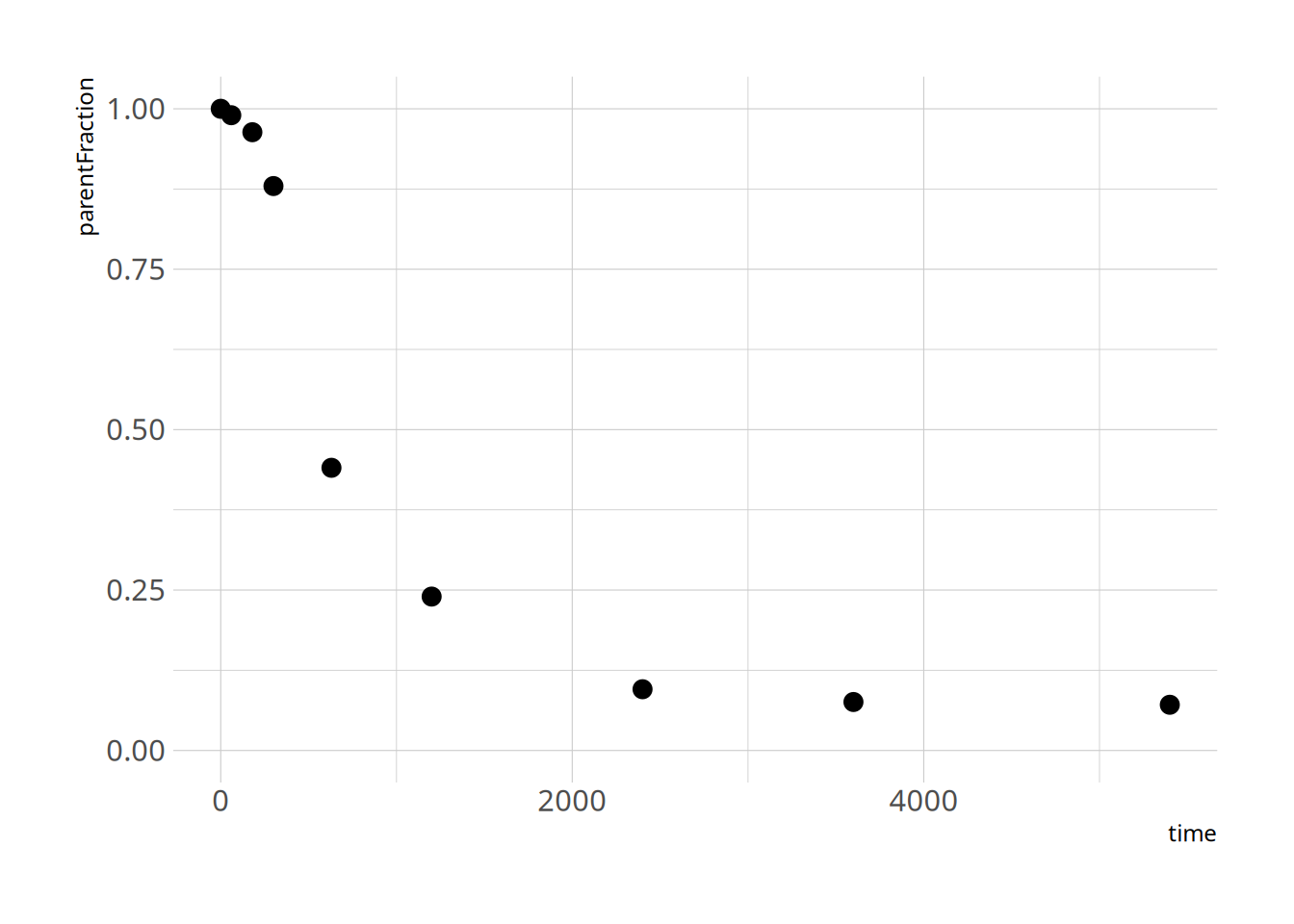

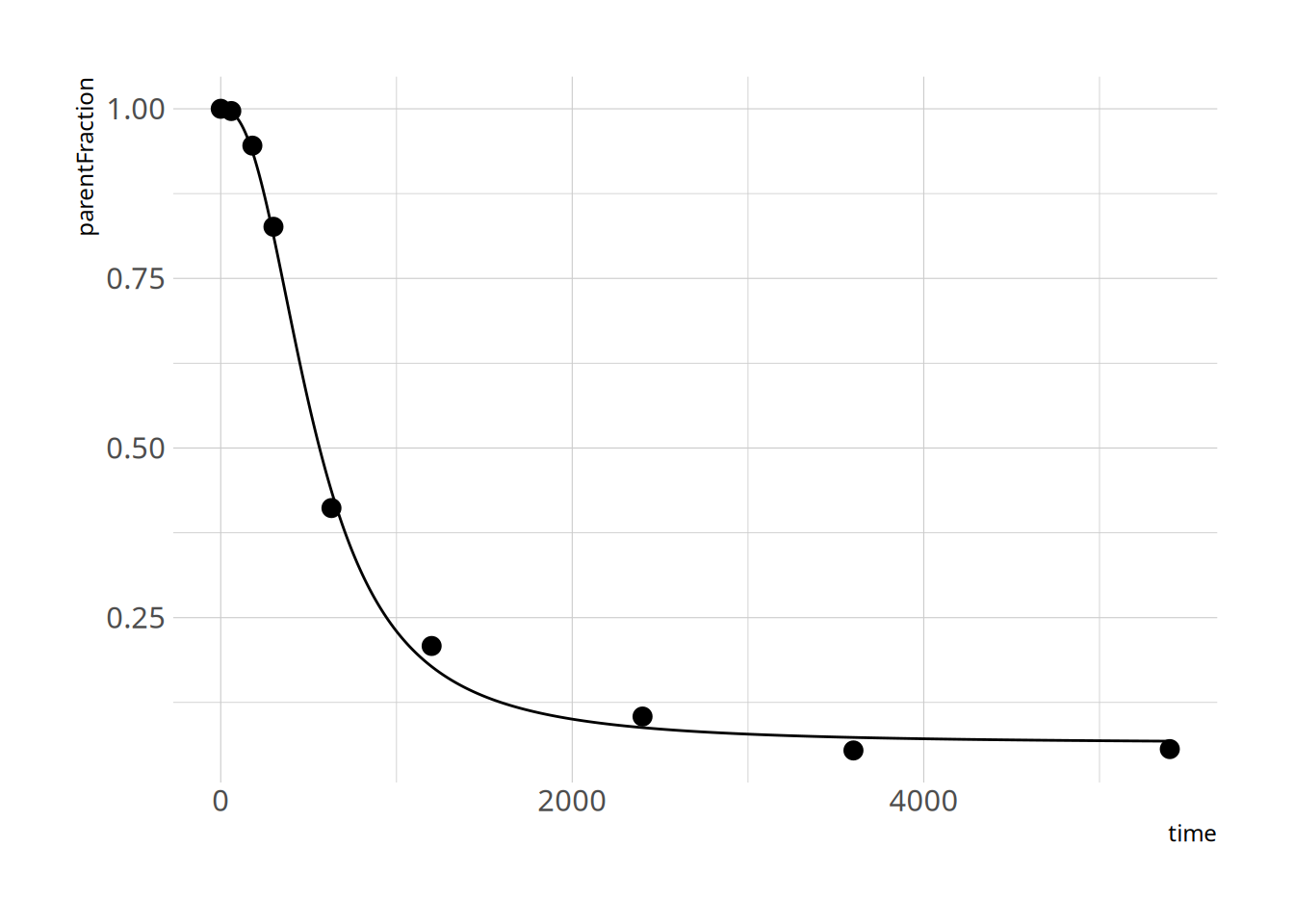

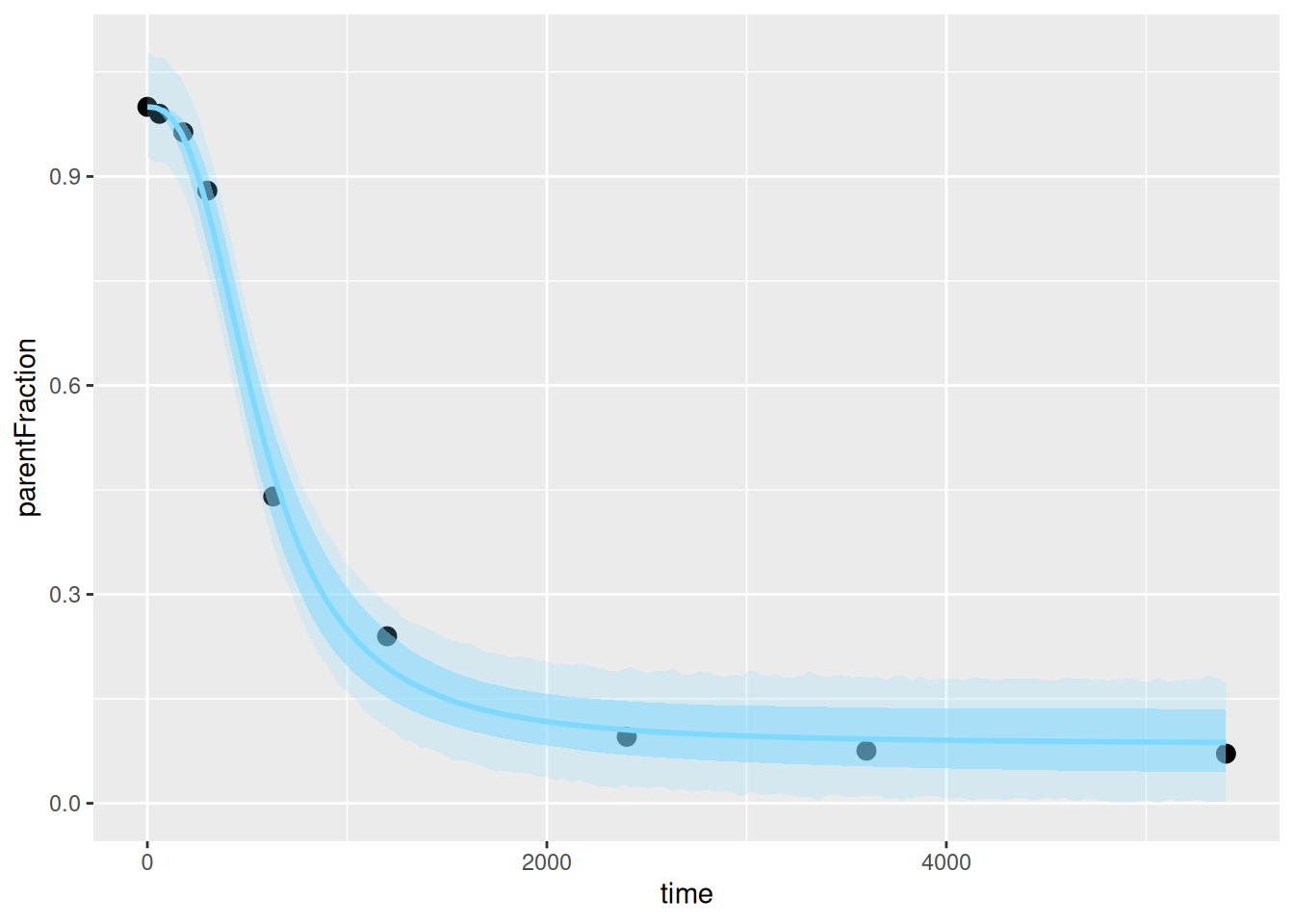

First let's take a look at how the data look

```{r}

pfdat <- pfdata$pf[[1]]

# Check column names to see what bd_extract() returns

names(pfdat)

# Use the correct column names for the plot

ggplot(pfdat, aes(x=time, y=parentFraction)) +

geom_point(size=3) +

ylim(c(0, 1))

```

And let's define the function. Note that *kinfitr* does contain the Hill function, and additionally contains extra optional parameters for if the decrease begins at a time other than 0, or if curve may not start at 1 (`kinfitr::metab_hill()`). But we'll define it ourselves for the purpose of this exercise.

```{r}

hillfunc <- function(Time, a, b, c) {

1 - ( ( (1-a) * Time^b) / ( c + (Time)^b ) )

}

```

... and let's fit it. I'll show off two methods. I'll use the *nls.multstart* package first, which fits the function with different starting parameters so that we don't have to worry too much about not defining good enough starting parameters.

```{r}

hill_nls_fit <- nls.multstart::nls_multstart(parentFraction ~ hillfunc(time, a, b, c),

data = pfdat,

lower=c(a=0, b=1, c=0),

upper=c(a=1, b=Inf, c=1e12),

start_lower = c(a=0, b=1, c=0),

start_upper = c(a=0.5, b=500, c=1e12),

iter = 500,

supp_errors = "Y")

```

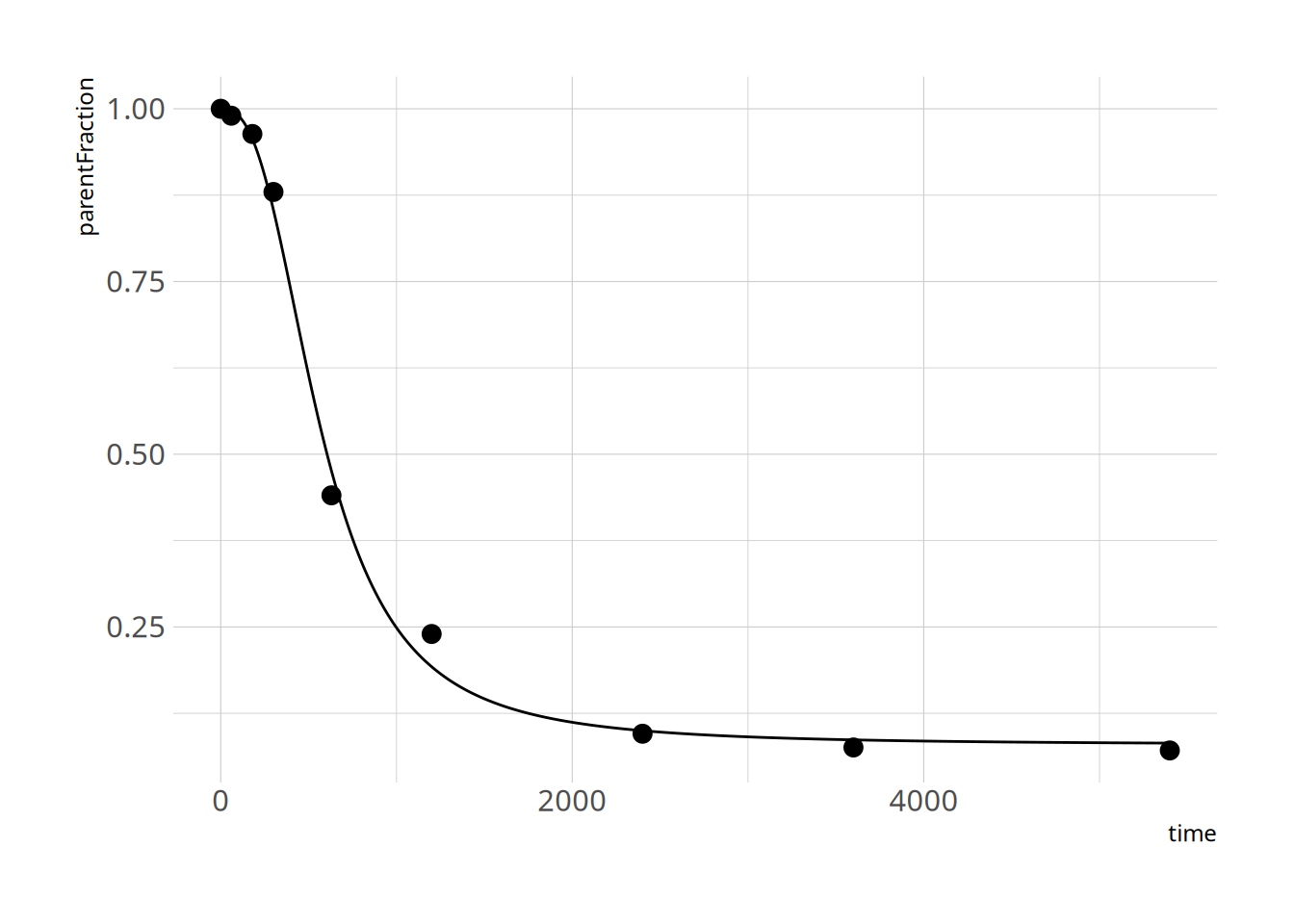

and let's check the fit

```{r}

summary(hill_nls_fit)

plot_nls <- function(nls_object, data) {

predframe <- tibble(time=seq(from=min(data$time), to=max(data$time),

length.out = 1024)) %>%

mutate(ParentFrac = predict(nls_object, newdata = list(time=.$time)))

ggplot(data, aes(x=time, y=parentFraction)) +

geom_point(size=3) +

geom_line(data = predframe, aes(x=time, y=ParentFrac))

}

plot_nls(hill_nls_fit, pfdat)

```

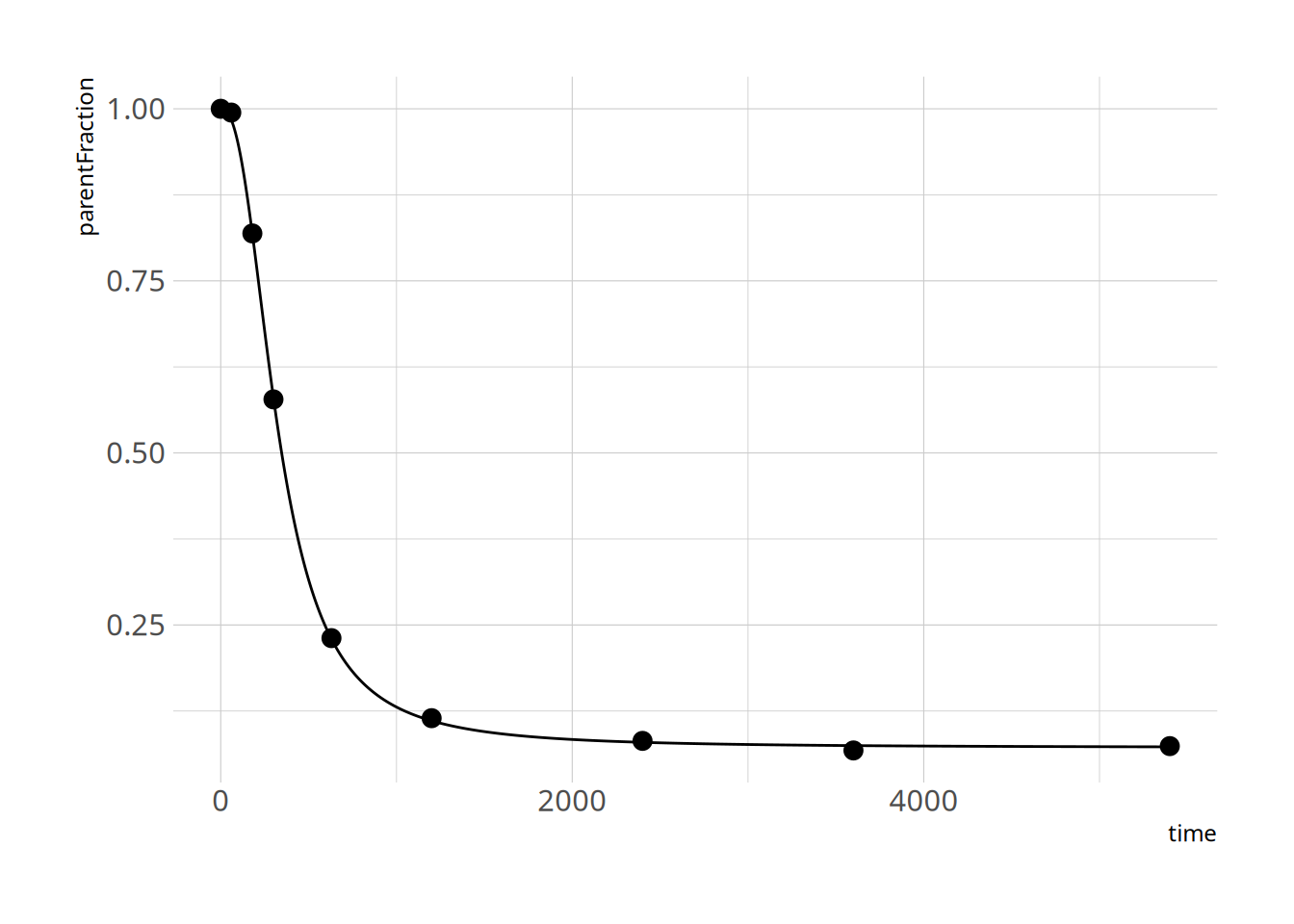

Looks pretty ok! Because the c estimate is so high, we might have some issues with finding it, and with defining a prior for it. I'll just define it on the log scale instead.

Let's just run that again then.

```{r}

hillfunc <- function(Time, a, b, c) {

1 - ( ( (1-a) * Time^b) / ( 10^c + (Time)^b ) )

}

```

... and let's fit it.

```{r}

hill_nls_fit <- nls.multstart::nls_multstart(parentFraction ~ hillfunc(time, a, b, c),

data = pfdat,

lower=c(a=0, b=1, c=0),

upper=c(a=1, b=Inf, c=12),

start_lower = c(a=0, b=1, c=0),

start_upper = c(a=0.5, b=500, c=12),

iter = 500,

supp_errors = "Y")

```

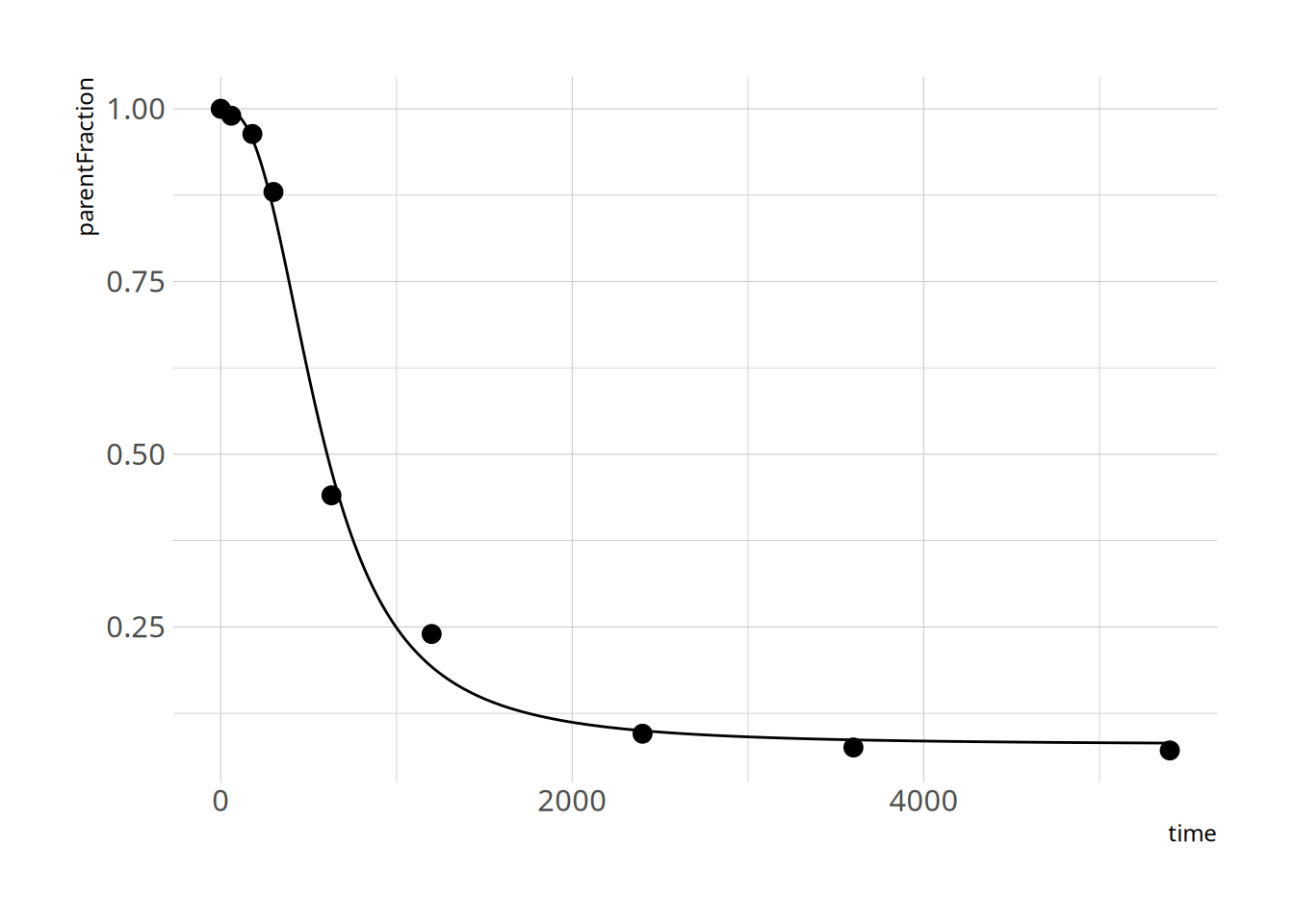

and let's check the fit

```{r}

summary(hill_nls_fit)

plot_nls(hill_nls_fit, pfdat)

```

Great - that seems to work fine!

Another way that we could do things would be to use *minpack.lm*. However, here we have to define our starting parameters more carefully.

If I do as follows, with some thumb-suck starting parameters

```{r, eval=FALSE}

hill_nls_fit2 <- minpack.lm::nlsLM(parentFraction ~ hillfunc(time, a, b, c),

data = pfdat,

lower=c(a=0, b=1, c=0),

upper=c(a=1, b=Inf, c=12),

start=c(a=0.5, b=10, c=2))

```

I get the error

```

Error in nlsModel(formula, mf, start, wts) :

singular gradient matrix at initial parameter estimates

```

This usually either means that our model isn't working correctly (usually an error in our model definition), or that our starting parameters are too far from reasonable ones. In this case, it's the latter. So let's try with some better parameters:

```{r}

hill_nls_fit2 <- minpack.lm::nlsLM(parentFraction ~ hillfunc(time, a, b, c),

data = pfdat,

lower=c(a=0, b=1, c=0),

upper=c(a=1, b=Inf, c=12),

start=c(a=0.5, b=5, c=5))

```

That works! And how do the values compare to our multstart values?

```{r}

coef(hill_nls_fit) # multstart

coef(hill_nls_fit2) # not multstart

```

Basically the same!

## Fitting all the cuves

So, now let's use `purrr` to fit all the curves.

```{r}

hill_nls_fit_func <- function(pf_df) {

nls.multstart::nls_multstart(parentFraction ~ hillfunc(time, a, b, c),

data = pf_df,

lower=c(a=0, b=1, c=0),

upper=c(a=1, b=Inf, c=12),

start_lower = c(a=0, b=1, c=0),

start_upper = c(a=0.5, b=500, c=12),

iter=500,

supp_errors = "Y")

}

pfdata <- pfdata %>%

mutate(hill_nls_fit = map(pf, ~hill_nls_fit_func(.x)))

```

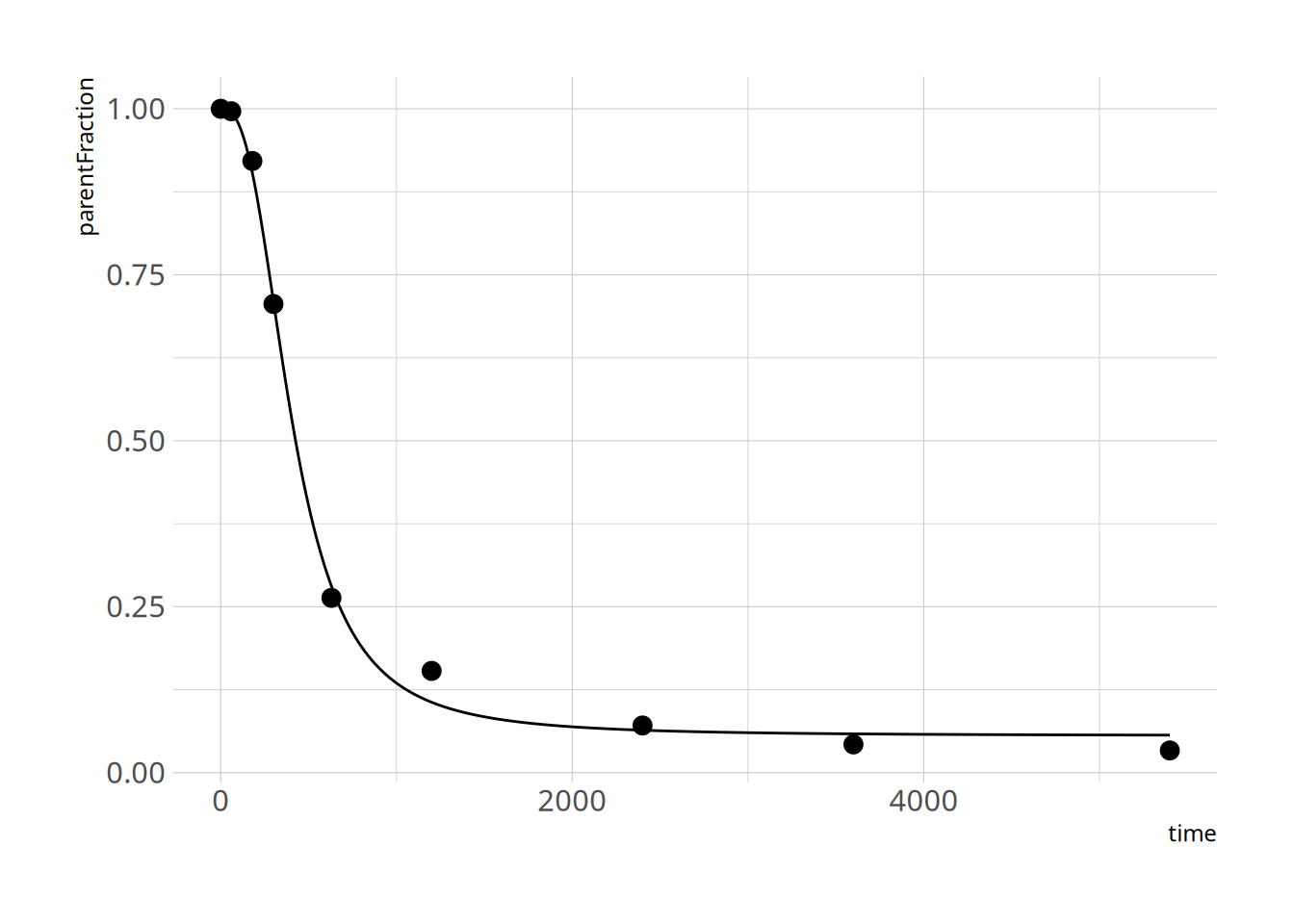

Let's check a couple of fits to make sure things went ok

```{r}

plot_nls( pfdata$hill_nls_fit[[3]], pfdata$pf[[3]])

plot_nls( pfdata$hill_nls_fit[[8]], pfdata$pf[[8]])

plot_nls( pfdata$hill_nls_fit[[12]], pfdata$pf[[12]])

```

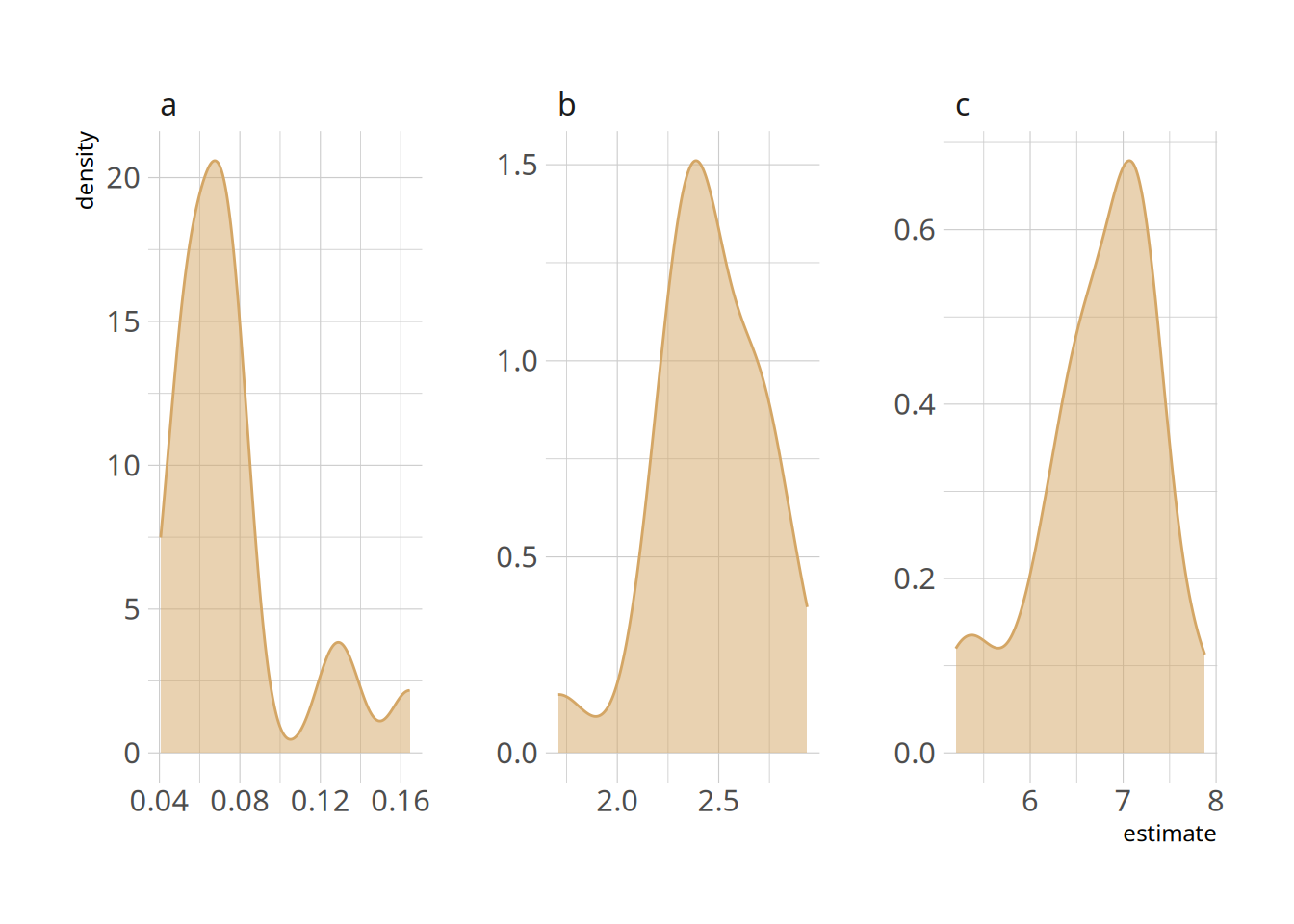

And let's check the distribution of the outcomes.

```{r}

hill_nls_outcomes <- pfdata %>%

mutate(outpars = map(hill_nls_fit, ~broom::tidy(.x))) %>%

select(-pf, -hill_nls_fit) %>%

unnest(cols="outpars")

ggplot(hill_nls_outcomes, aes(x=estimate, colour=term, fill=term)) +

geom_density(alpha=0.5, fill=colourcodes[1], colour=colourcodes[1]) +

facet_wrap(~term, scales = "free")

hill_nls_outcomes_summary <- hill_nls_outcomes %>%

group_by(term) %>%

summarise(mean = mean(estimate),

median = median(estimate),

sd = sd(estimate)) %>%

ungroup()

knitr::kable(hill_nls_outcomes_summary, digits = 3)

```

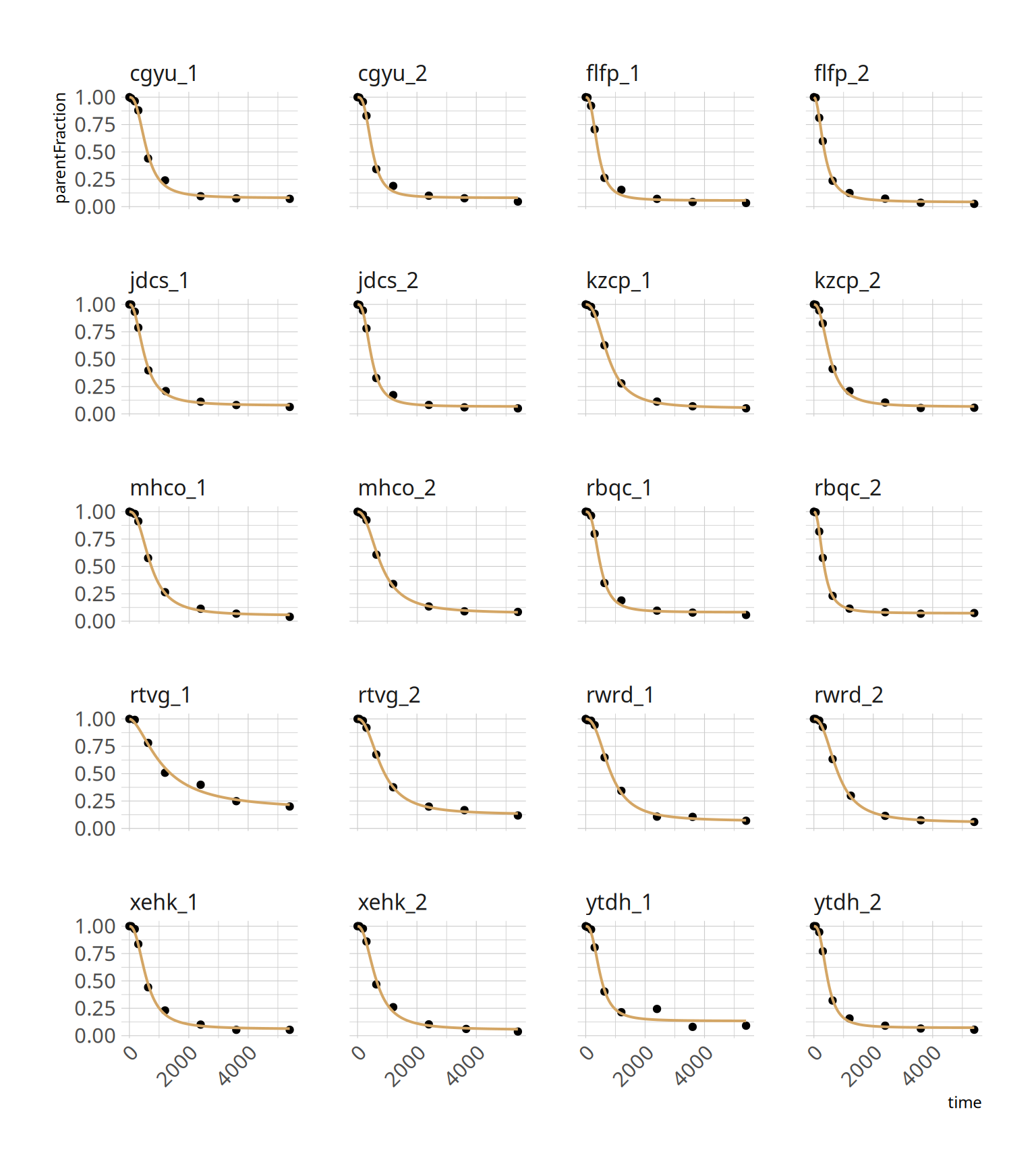

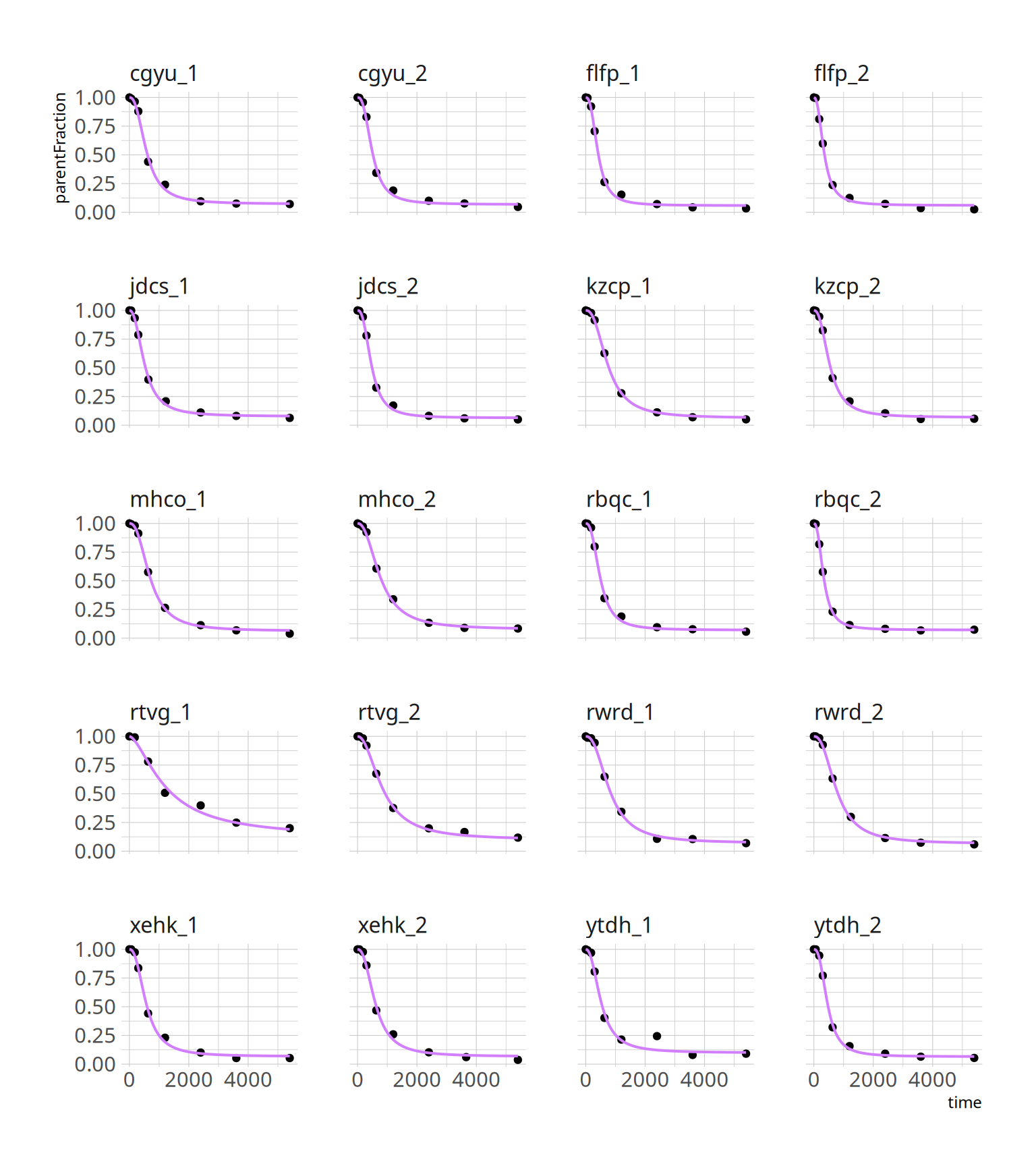

### Fits

And we can check the fits

```{r, fig.height=9, fig.width=8}

hill_nls_plots <- pfdata %>%

select(PET, pf) %>%

unnest(pf)

hill_predtimes <- tidyr::crossing(PET=pfdata$PET,

time=seq(min(hill_nls_plots$time),

max(hill_nls_plots$time),

length.out=128))

hill_nlspreds <- hill_predtimes %>%

group_by(PET) %>%

nest(preds = time) %>%

left_join(select(pfdata, PET, hill_nls_fit)) %>%

mutate(preds = map2(preds, hill_nls_fit, ~broom::augment(.y, newdata=.x))) %>%

select(-hill_nls_fit) %>%

ungroup() %>%

unnest(cols=preds)

ggplot(hill_nls_plots, aes(x=time, y=parentFraction)) +

geom_point() +

geom_line(data=hill_nlspreds, aes(y=.fitted), colour=colourcodes[1], size=0.7) +

facet_wrap(~PET, ncol=4) +

theme(axis.text.x = element_text(angle = 45, hjust = 1))

```

## NLS Summary

What we have done is to fit each curve, separately. The fits look pretty good. It's worth noting that the second-to-last measurement, *ytdh_1*, has a pretty bad data point around the middle. This pulls the fitted curve towards it, and it overshoots the last two points. This is quite annoying, and not to easy to fix. We could choose to remove the point. Or just leave it and accept some degree of error.

Doing our modelling in this way, so-called fixed effects regression, is, in the words of Richard McElreath, equivalent to giving our model anterograde amnesia. The curves are all fairly similar, however instead of learning anything about what reasonable values of the parameters are following fitting of each curve, we instead completely wipe the memory of our model before it fits each new curve. So, this is why we would hope that a multilevel approach might do a better job.

# Fitting using frequentist multilevel modelling (nlme)

Here, we use a multilevel modelling approach, but still using maximum likelihood. I'll use the *nlme* package here. While this functionality does exist in *lme4*, the nonlinear syntax was always a bit unintuitive for me. What we are doing is telling the model that each of the parameters is sampled from a normal distribution across individuals. In this way, the model can pull unlikely parameter values back towards the mean of the distribution. In addition, the correlations between the parameters are estimated, which allows the model to learn about likely values of each parameter based on the values of the other parameters, and constrain the selection of unlikely values in this way too.

Just a note: for simplicity's sake, I will model all measurements as being derived from a common normal distribution, i.e. nested within measurements. However, of the 20 measurements, these come from 10 individuals, with 2 measurements within each individual. We could have fit this instead, but this is less common, and the point of this exercise is to go through the syntax.

## Fitting the model to everyone

So, now we fit everything at once. Let's first prepare the data in a long, unnested form.

```{r}

pf_modeldata <- pfdata %>%

select(PET:pf) %>%

unnest(cols="pf")

head(pf_modeldata, n = 12)

```

Now let's fit the model!

```{r}

hill_nlme_fit <- nlme(parentFraction ~ hillfunc(time, a, b, c),

data = pf_modeldata,

fixed=a + b + c ~ 1,

random = a + b + c ~ 1,

groups = ~ PET,

start = hill_nls_outcomes_summary$mean,

verbose = F)

summary(hill_nlme_fit)

```

And let's check out the parameters

```{r}

nlme_coef = as_tibble(coef(hill_nlme_fit), rownames = 'PET')

nlme_coef

```

### Fits

And we can check the fits

```{r, fig.height=9, fig.width=8}

hill_nlmepreds <- hill_predtimes %>%

mutate(.fitted=predict(hill_nlme_fit, newdata=hill_predtimes))

ggplot(pf_modeldata, aes(x=time, y=parentFraction)) +

geom_point() +

geom_line(data=hill_nlmepreds, aes(y=.fitted), colour=colourcodes[2], size=0.7) +

facet_wrap(~PET, ncol=4)

```

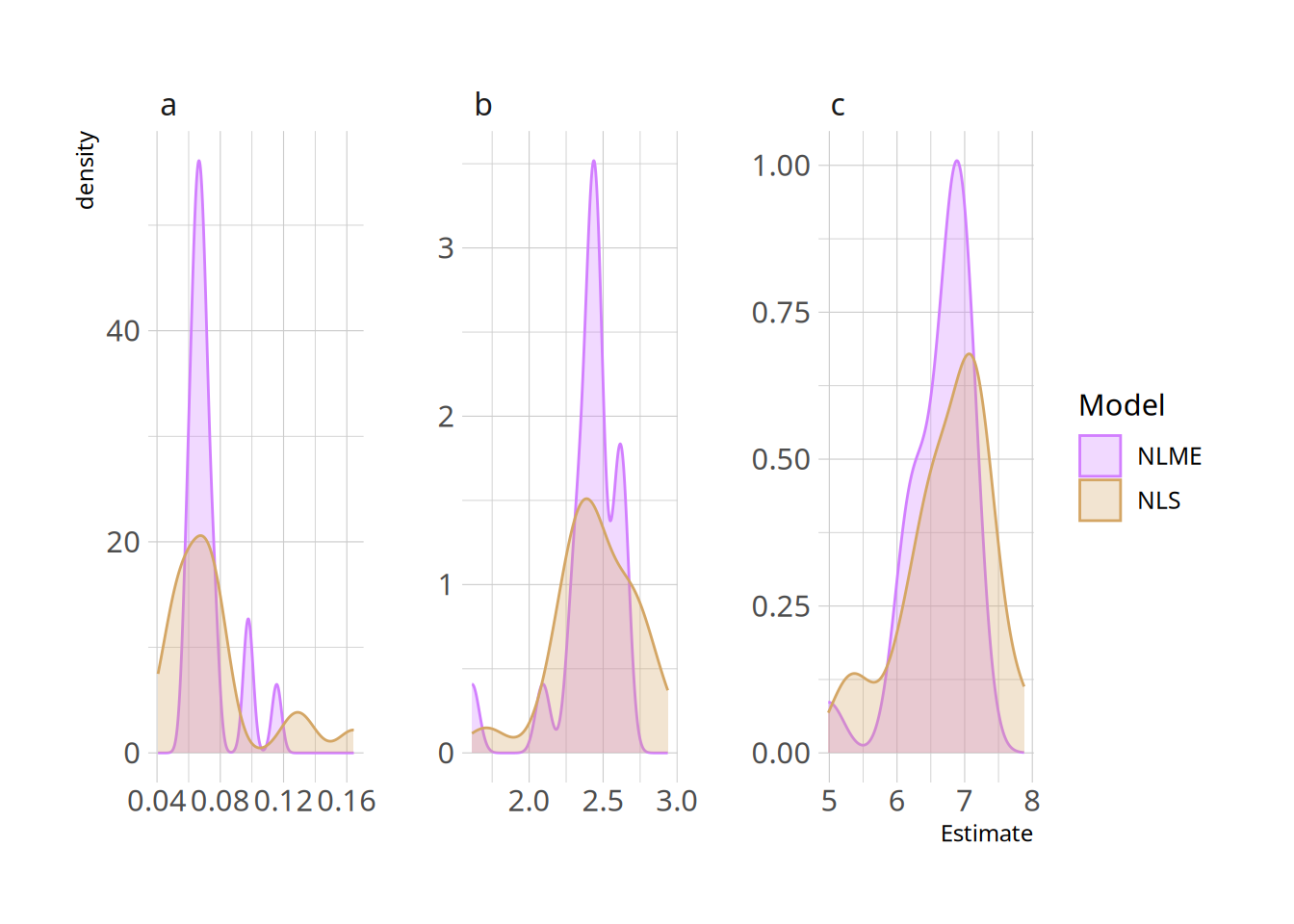

And we can compare them to the NLS outcomes

```{r}

nlme_coef_tidy <- nlme_coef %>%

gather(Parameter, Estimate, -PET) %>%

mutate(Model = "NLME")

nls_coef_tidy <- hill_nls_outcomes %>%

select(PET, Parameter=term, Estimate=estimate) %>%

mutate(Model = "NLS")

nls_nlme_comparison <- full_join(nls_coef_tidy, nlme_coef_tidy)

ggplot(nls_nlme_comparison, aes(x=Estimate, colour=Model, fill=Model)) +

geom_density(alpha=0.3) +

scale_colour_manual(values=colourpal) +

scale_fill_manual(values=colourpal) +

facet_wrap(~Parameter, scales="free")

```

We can see that the NLME density is much peakier, showing the shrinkage: values are being pulled towards the centre based on the lessons learned by fitting the whole sample together.

## NLME Summary

We can see the effect of the shrinkage immediately in the second-to-last curve, which was problematic before, as well as in the distributions of the parameters. So that's nice! But now let's go deeper.

# Bayesian multilevel modelling using MCMC with brms

So, now we are going to model the same curves, but using Markov Chain Monte Carlo (MCMC) instead of maximum likelihood. This requires that we set priors on our parameters (which gives us the opportunity to include all the things we know about our parameters *a priori*). Then the algorithm traverses parameter space, spending time in any particular region in proportion to its relative posterior probability. This allows us to build up a posterior probability distribution over each parameter, and to make inferences using the probabilities themselves.

We use MCMC with STAN under the hood, and *brms* gives us a convenient interface, which writes all the STAN code for us and makes our lives easier - at least when the model is simple enough to be written using *brms* syntax.

Because the purpose of this exercise here is mostly to grok the syntax, I've just used priors which are based on the same dataset. This is not what you should be doing in practice.

## Modelling a single curve

Let's first just try a single curve for simplicity's sake.

```{r}

hillprior <- c(

set_prior("normal(0.2, 0.1)", nlpar = "a", lb=0, ub=1),

set_prior("normal(2, 1)", nlpar = "b", lb=1),

set_prior("normal(7, 3)", nlpar = "c", lb=0),

set_prior("normal(0.05, 0.2)", class="sigma"))

hill_bayes_fit_formula <- bf(parentFraction ~ 1 - ( ( (1-a) * time^b) /

( 10^c + (time)^b ) ),

# Nonlinear variables

a + b + c ~ 1,

# Nonlinear fit

nl = TRUE)

hill_bayes_fit <- brm(

hill_bayes_fit_formula,

family=gaussian(),

data = pfdat,

prior = hillprior )

```

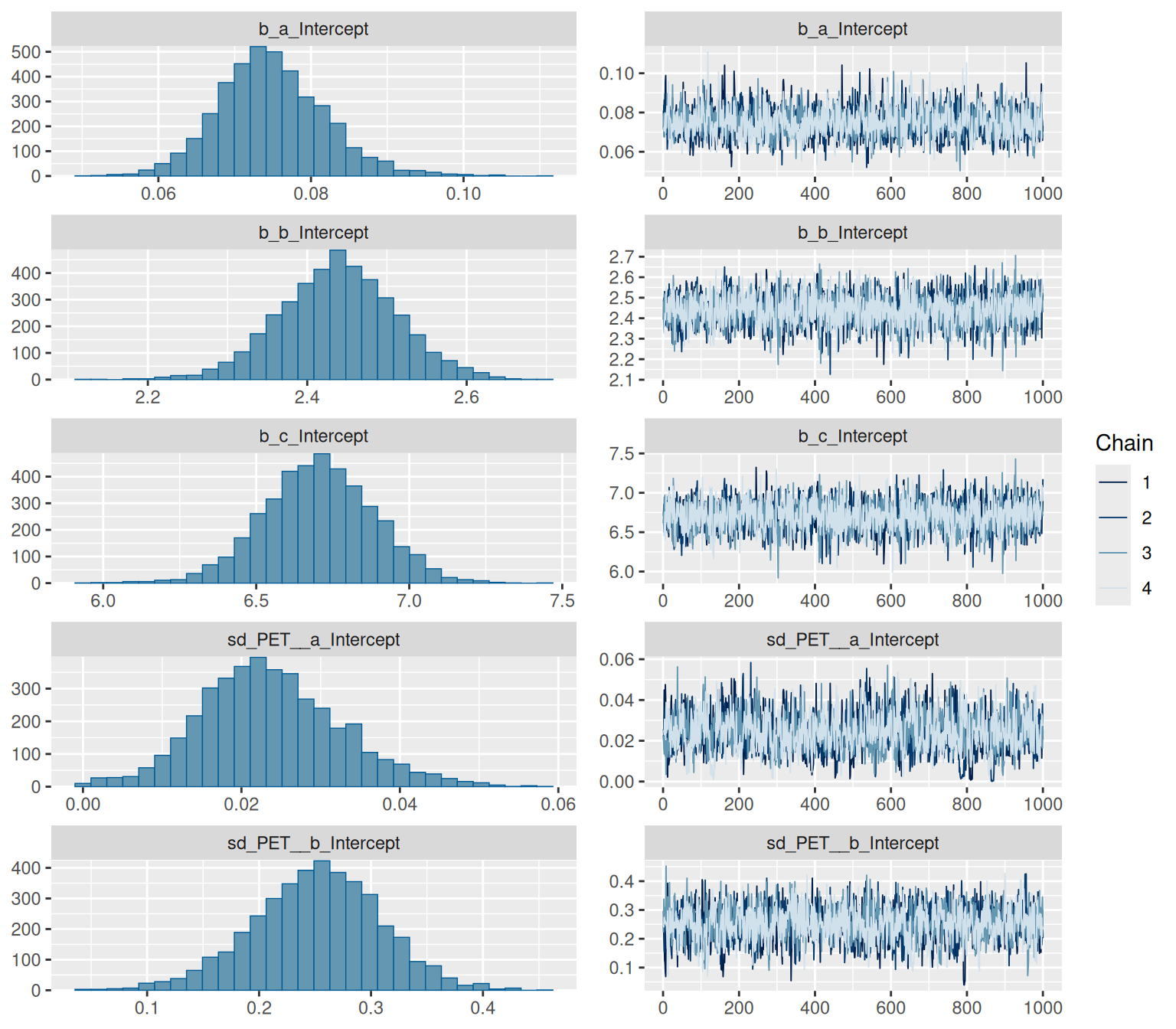

We have some divergent transitions. This would usually be something to look into, but this guide is about the syntax, so we'll ignore that for today.

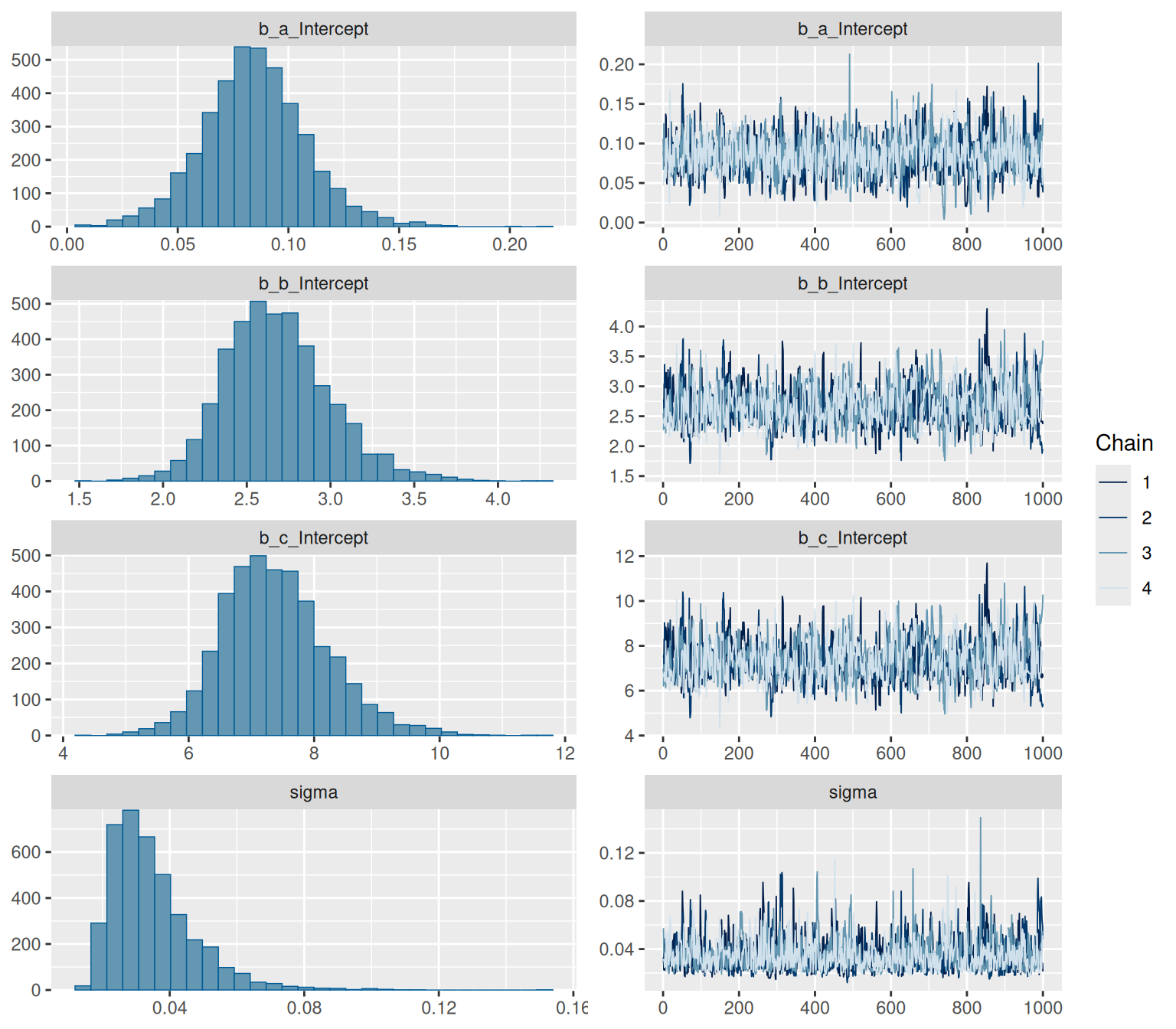

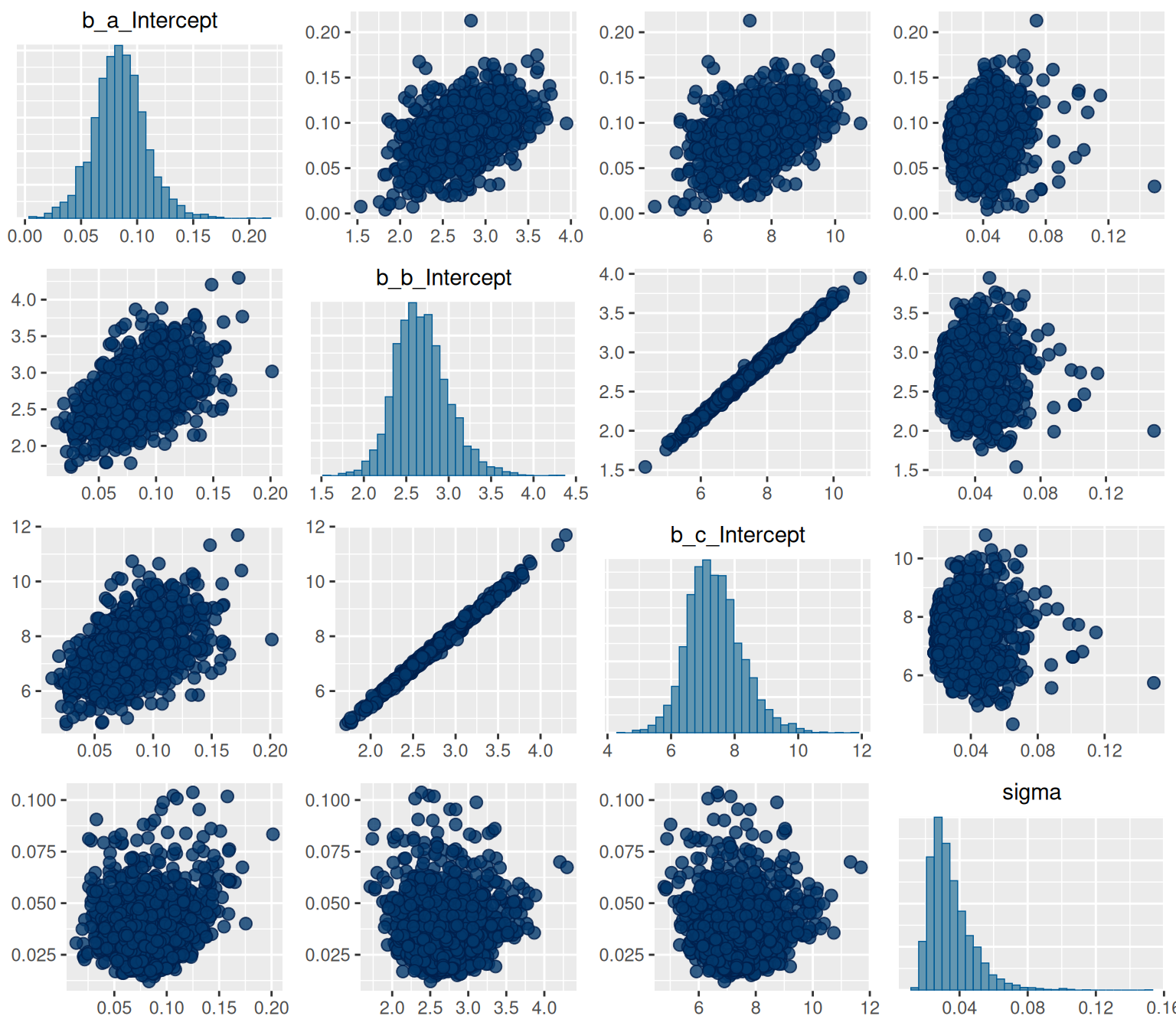

Let's check the fit!

```{r, fig.height=7, fig.width=8}

summary(hill_bayes_fit)

plot(hill_bayes_fit)

pairs(hill_bayes_fit)

```

Seems to have done a pretty ok job! We can see that there's a high degree of correlation between b and c.

```{r}

predtimes <- unique(hill_predtimes$time)

hill_bayes_fitted <- fitted(hill_bayes_fit,

newdata=list(time = predtimes)) %>%

as_tibble()

hill_bayes_pred <- predict(hill_bayes_fit,

newdata=list(time = predtimes)) %>%

as_tibble()

```

```{r}

hill_bayes_ribbons <- tibble(

time = predtimes,

parentFraction=hill_bayes_fitted$Estimate,

Estimate = hill_bayes_fitted$Estimate,

pred_lower = hill_bayes_pred$Q2.5,

pred_upper = hill_bayes_pred$Q97.5,

fitted_lower = hill_bayes_fitted$Q2.5,

fitted_upper = hill_bayes_fitted$Q97.5)

ggplot(pfdat, aes(x=time, y=parentFraction)) +

geom_point(size=3) +

geom_ribbon(data=hill_bayes_ribbons, aes(ymin=pred_lower, ymax=pred_upper),

alpha=0.2, fill=colourcodes[3]) +

geom_ribbon(data=hill_bayes_ribbons, aes(ymin=fitted_lower, ymax=fitted_upper),

alpha=0.5, fill=colourcodes[3]) +

geom_line(data=hill_bayes_ribbons, aes(y=Estimate), colour=colourcodes[3],

size=1)

```

And the fit looks ok too. Here we see the fit, the 95% credible interval (the uncertainty our model has about the line of best fit), and the 95% prediction interval (the interval for our model believes that new data points will fall within with a 95% probability).

Let's also compare the outcomes between the NLS and the MCMC fit

```{r}

summary(hill_nls_fit)

summary(hill_bayes_fit)

```

## Modelling all the curves

Here, I'll also define the function as a STAN function, just to demonstrate the syntax. Don't forget those semicolons!

```{r}

hillstan <- "

real hill_stan(real Time, real a, real b, real c) {

real pred;

pred = 1 - ( ( (1-a) * Time^b) / ( 10^c + (Time)^b ) );

return(pred);

}

"

get_prior(bf(parentFraction ~ hill_stan(time, a, b, c),

# Nonlinear variables

a + b + c ~ 1 + (1|k|PET),

# Nonlinear fit

nl = TRUE), data=pf_modeldata)

hillprior_multilevel <- c(

set_prior("normal(0.2, 0.1)", nlpar = "a", lb=0, ub=1),

set_prior("normal(2, 1)", nlpar = "b", lb=1),

set_prior("normal(7, 3)", nlpar = "c", lb=0),

set_prior("normal(0.05, 0.02)", class="sigma"),

set_prior("normal(0.03, 0.02)", class="sd", nlpar="a"),

set_prior("normal(0.3, 0.1)", class="sd", nlpar="b"),

set_prior("normal(0.7, 0.2)", class="sd", nlpar="c"),

set_prior("lkj(2)", class = "cor"))

hill_multilevelbayes_formula <- bf(parentFraction ~ hill_stan(time, a, b, c),

# Nonlinear variables

a + b + c ~ 1 + (1|k|PET),

# Nonlinear fit

nl = TRUE)

```

Let's check the STAN code before fitting it

```{r}

make_stancode(hill_multilevelbayes_formula,

family=gaussian(),

data = pf_modeldata,

prior = hillprior_multilevel,

stanvars = stanvar(scode = hillstan,

block="functions"))

```

Looks good! Happy not to have to write this all ourselves! Now let's fit it.

```{r}

hill_multilevelbayes_fit <- brm(

hill_multilevelbayes_formula,

family=gaussian(),

data = pf_modeldata,

prior = hillprior_multilevel,

stanvars = stanvar(scode = hillstan,

block="functions"),

cores = 4)

```

And check it.

```{r}

summary(hill_multilevelbayes_fit)

```

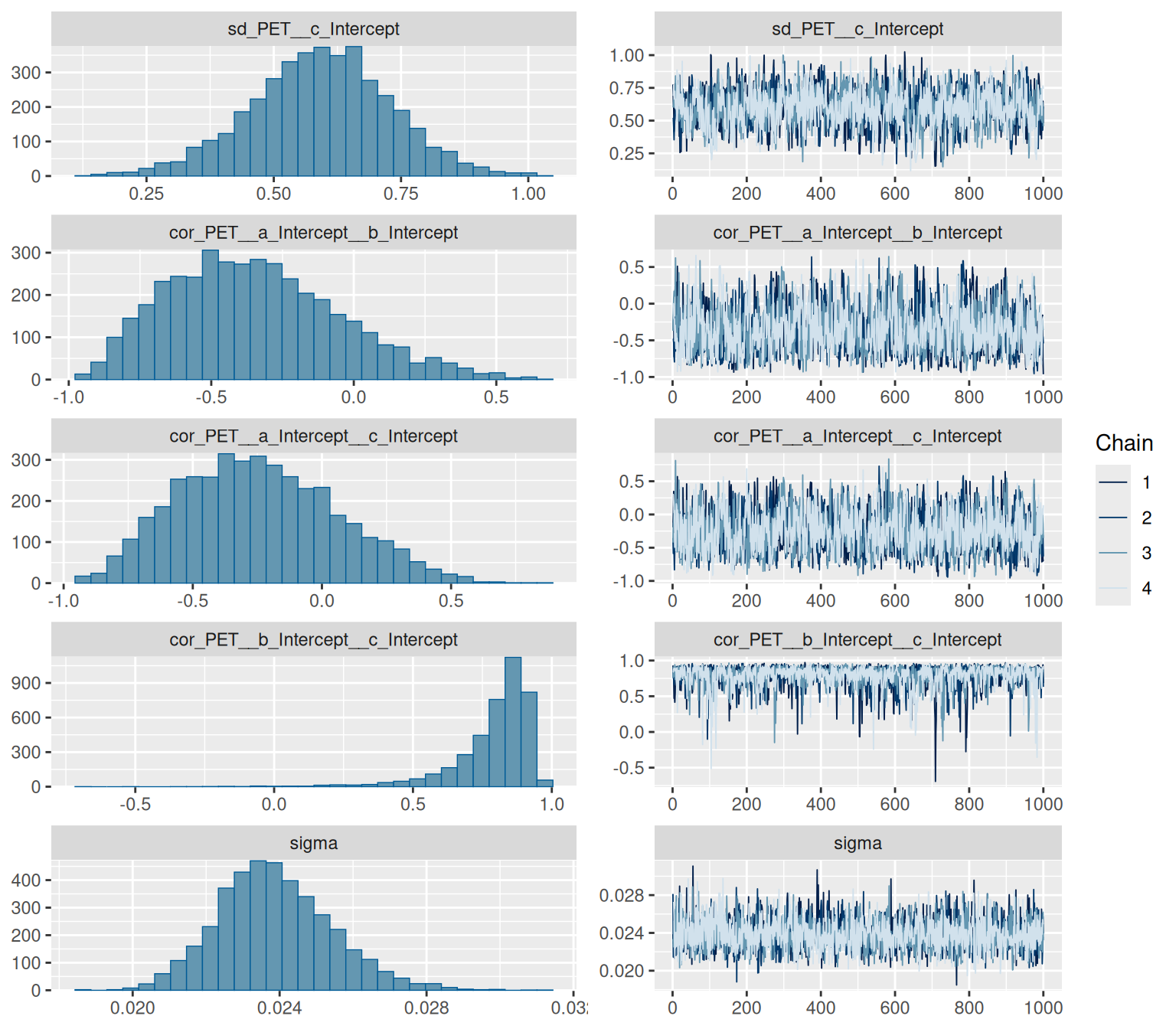

We have some divergent transitions. Again, this would usually be something to look into, but this guide is about figuring out the syntax, so we'll ignore that for today.

```{r, fig.height=7, fig.width=8}

plot(hill_multilevelbayes_fit, ask = FALSE)

```

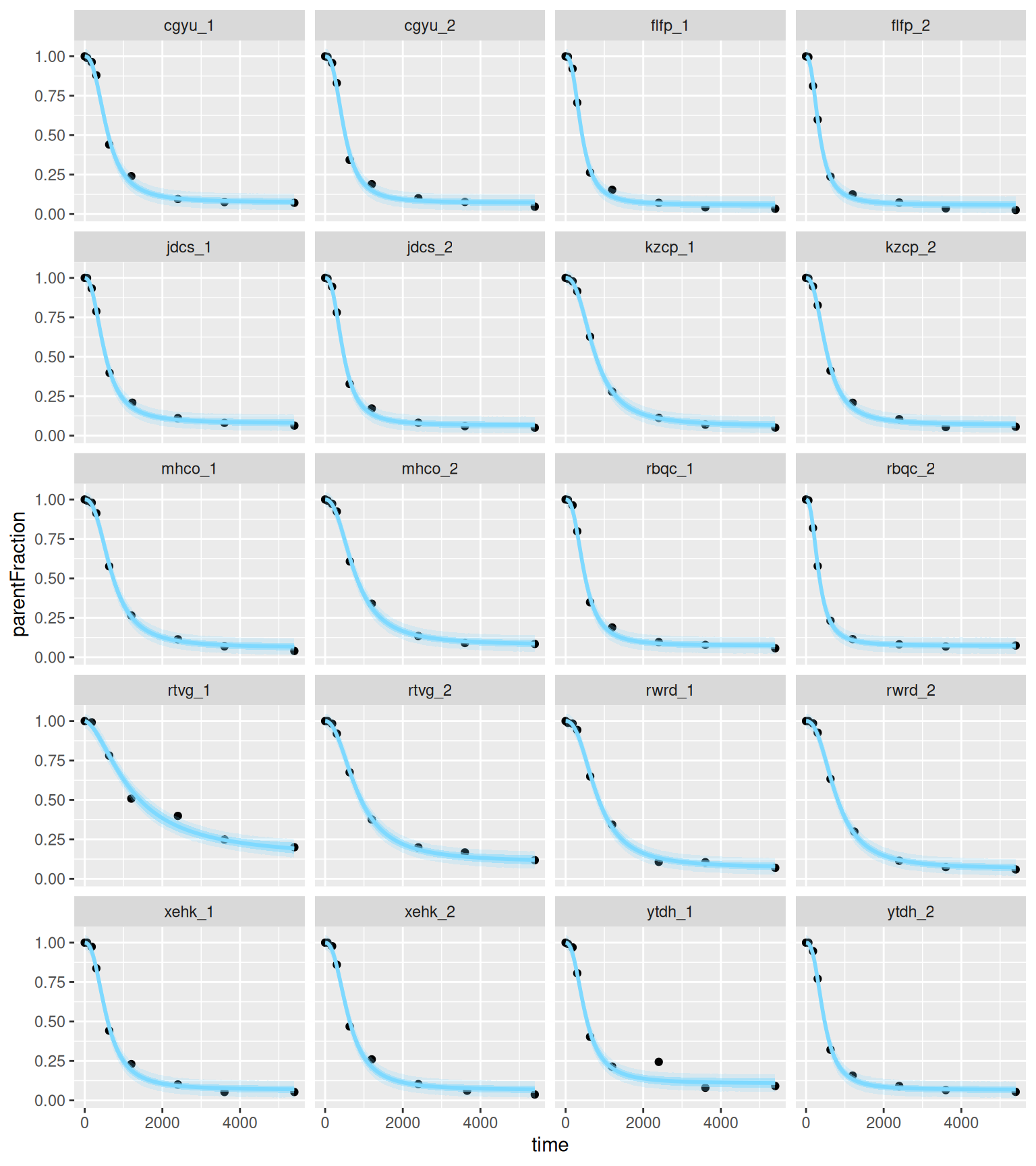

### Fits

And let's see the individual fits. Because we've defined a function, we first need to expose it, using the expose_functions command.

```{r, fig.height=9, fig.width=8}

expose_functions(hill_multilevelbayes_fit, vectorize = TRUE)

hill_mlbayes_pred <- predict(hill_multilevelbayes_fit,

newdata=hill_predtimes) %>%

as_tibble()

hill_mlbayes_fitted <- fitted(hill_multilevelbayes_fit,

newdata=hill_predtimes) %>%

as_tibble()

hill_mlbayes_ribbons <- tibble(

PET = hill_predtimes$PET,

time = hill_predtimes$time,

parentFraction = hill_mlbayes_fitted$Estimate,

pred_lower = hill_mlbayes_pred$Q2.5,

pred_upper = hill_mlbayes_pred$Q97.5,

fitted_lower = hill_mlbayes_fitted$Q2.5,

fitted_upper = hill_mlbayes_fitted$Q97.5)

ggplot(pf_modeldata, aes(x=time, y=parentFraction)) +

geom_point() +

geom_line(data=hill_mlbayes_ribbons, aes(y=parentFraction), colour=colourcodes[3],

size=1) +

geom_ribbon(data=hill_mlbayes_ribbons, alpha=0.2, aes(ymin=pred_lower,

ymax=pred_upper),

fill=colourcodes[3]) +

geom_ribbon(data=hill_mlbayes_ribbons, alpha=0.5, aes(ymin=fitted_lower,

ymax=fitted_upper),

fill=colourcodes[3]) +

facet_wrap(~PET, ncol=4)

```

This is really illuminating to compare with the above single-subject plots: the inner blue ribbons contain the 95% credible interval, and the outer ones contain the 95% prediction intervals, and we can see that they're pretty tight compared to the fitting of the single curve above. We also see that the ugly data point in the second to last curve falls well outside the 95% predicted interval, suggesting that the model believes that it was an unlikely observation.

### The Average Curve

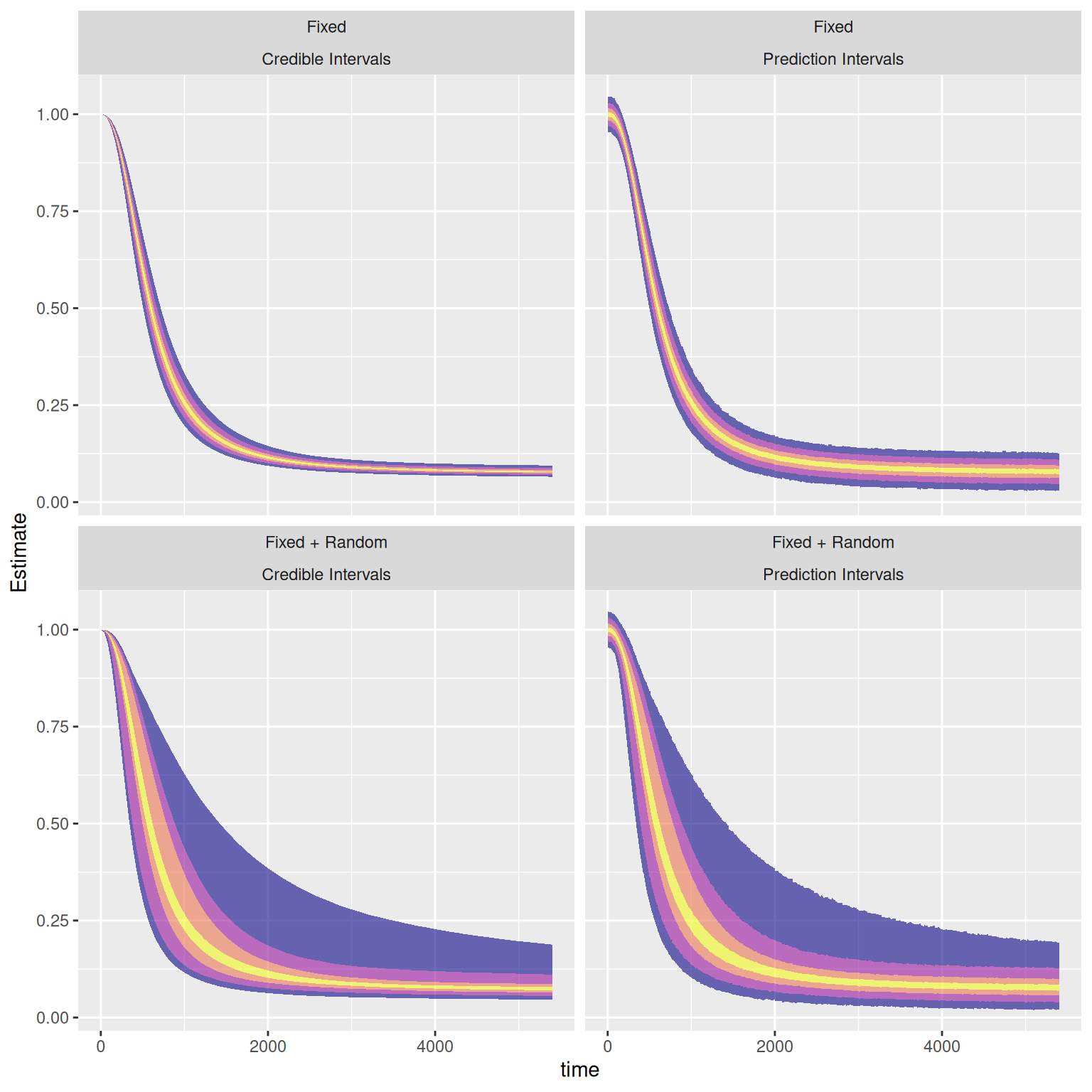

So, we've looked a little bit at the credible intervals, and the prediction intervals. I thought I'd take the opportunity to take a slightly closer look at the the average curve. Here, based on our model, we can examine four kinds of uncertainty around the average curve:

* Our model's uncertainty around our estimate of the average curve at each given time point (fixed effects credible interval)

* The values our model would expect to observe from the average individual (based on measurement error etc.) (fixed effects prediction interval)

* Our model's uncertainty around an estimate of the curve for a new individual, not yet examined, based on the remainder of the sample (fixed + random effects credible interval)

* The values our model would expect to observe from a new individual (based on measurement error + their not being "the average individual" etc.) (fixed + random effects prediction interval)

Let's give it a go.

```{r, fig.height=5, fig.width=10}

probnames <- c(20, 50, 80, 95)

probs <- c(40, 60, 25, 75, 10, 90, 2.5, 97.5)/100

probtitles <- probs[order(probs)]*100

probtitles <- paste("Q", probtitles, sep="")

hill_mlbayes_avgpred <- predict(hill_multilevelbayes_fit,

newdata=list(time=hill_predtimes$time),

re_formula = NA,

probs=probs) %>%

as_tibble() %>%

mutate(Curve = "Prediction Intervals",

Effects = "Fixed")

hill_mlbayes_avgfitted <- fitted(hill_multilevelbayes_fit,

newdata=list(time=hill_predtimes$time),

re_formula = NA,

probs=probs) %>%

as_tibble() %>%

mutate(Curve = "Credible Intervals",

Effects = "Fixed")

hill_mlbayes_avgfitted_ns <- fitted(hill_multilevelbayes_fit,

newdata=list(time=hill_predtimes$time,

PET=rep("new", nrow(hill_predtimes))),

probs=probs, allow_new_levels=TRUE) %>%

as_tibble() %>%

mutate(Curve = "Credible Intervals",

Effects = "Fixed + Random")

hill_mlbayes_avgpred_ns <- predict(hill_multilevelbayes_fit,

newdata=list(time=hill_predtimes$time,

PET=rep("new", nrow(hill_predtimes))),

probs=probs, allow_new_levels=TRUE) %>%

as_tibble() %>%

mutate(Curve = "Prediction Intervals",

Effects = "Fixed + Random")

hill_mlbayes_aribbons <- bind_rows(hill_mlbayes_avgpred,

hill_mlbayes_avgfitted,

hill_mlbayes_avgpred_ns,

hill_mlbayes_avgfitted_ns) %>%

mutate(time = rep(hill_predtimes$time, 4))

```

```{r, fig.height=8, fig.width=8}

avg_pal <- viridis::plasma(n=4)

names(avg_pal) <- paste(probnames, "%", sep="")

ggplot(hill_mlbayes_aribbons, aes(x=time, y=Estimate)) +

geom_ribbon(aes_string(ymin=probtitles[1], ymax=probtitles[2]),

fill=avg_pal[1], alpha=0.6) +

geom_ribbon(aes_string(ymin=probtitles[7], ymax=probtitles[8]),

fill=avg_pal[1], alpha=0.6) +

geom_ribbon(aes_string(ymin=probtitles[2], ymax=probtitles[3]),

fill=avg_pal[2], alpha=0.6) +

geom_ribbon(aes_string(ymin=probtitles[6], ymax=probtitles[7]),

fill=avg_pal[2], alpha=0.6) +

geom_ribbon(aes_string(ymin=probtitles[3], ymax=probtitles[4]),

fill=avg_pal[3], alpha=0.6) +

geom_ribbon(aes_string(ymin=probtitles[5], ymax=probtitles[6]),

fill=avg_pal[3], alpha=0.6) +

geom_ribbon(aes_string(ymin=probtitles[4], ymax=probtitles[5]),

fill=avg_pal[4], alpha=0.6) +

facet_wrap(Effects~Curve, ncol=2)

```

We can get quite a lot of information from these plots! We can see that we are quite certain of the average curve (top left), and that the amount of error around our curve, according to our model, is quite small (top right). We can also see that we could not hope to be able to use an average curve as a substitute for measuring the individual curves, because there is a lot of inter-individual variability (bottom left). The true inter-individual variability (bottom left) comprises the vast majority of the variance of the measured values, and the the additional measurement error constitutes a very small extra amount of variance (bottom right). We could also use the fixed+random prediction interval as a means by which to flag unlikely values during measurement, in case they have been entered incorrectly, or the machine is malfunctioning etc.

# Comparing the Parameters and Fits

## Parameters

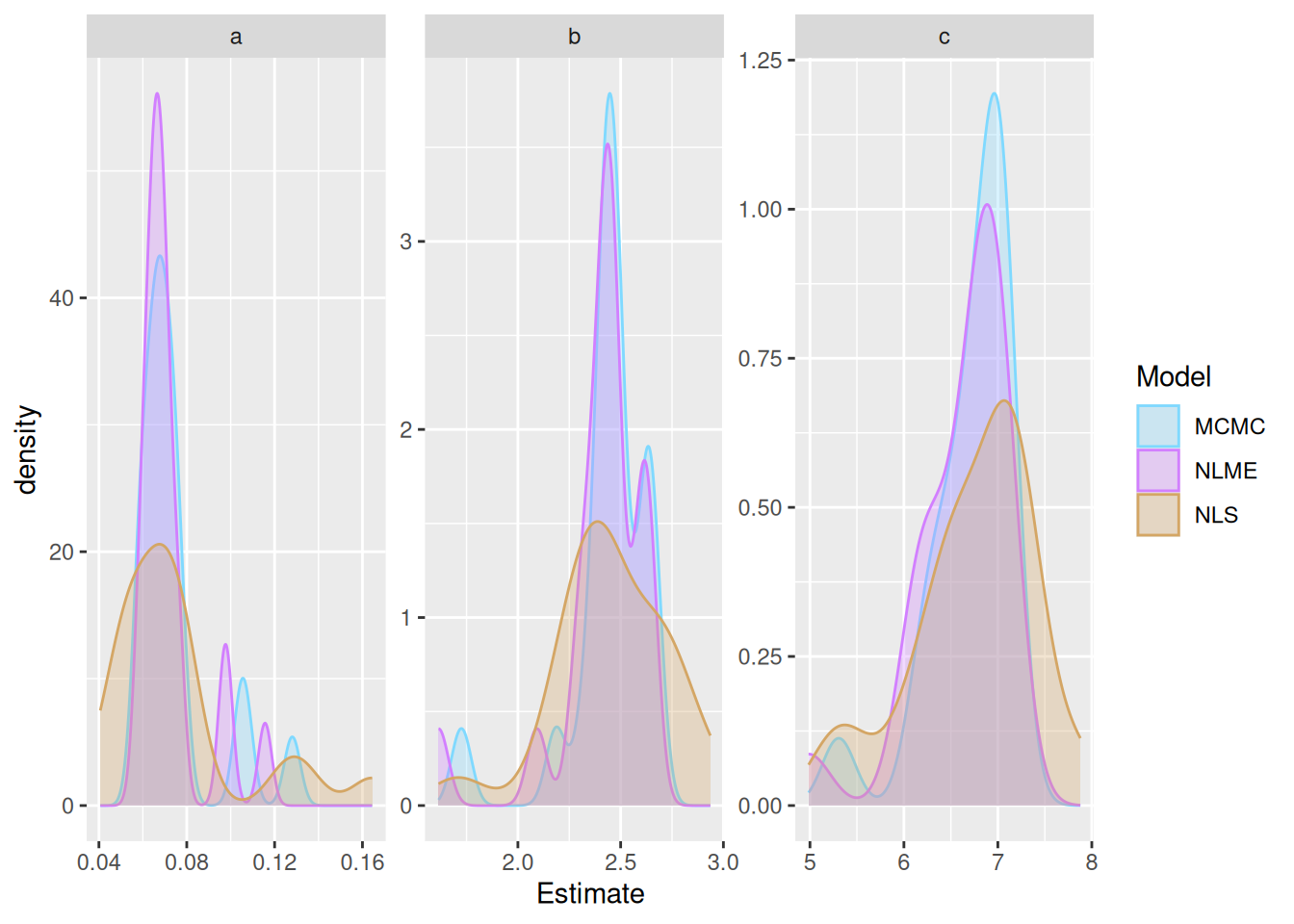

Here we can compare the parameters from each of the methods to examine their shrinkage

```{r}

hill_mlbayes_arrays <- coef(hill_multilevelbayes_fit)

hill_mlbayes_outcomes <- rbind(a=hill_mlbayes_arrays$PET[, , 1],

b=hill_mlbayes_arrays$PET[, , 2],

c=hill_mlbayes_arrays$PET[, , 3]) %>%

as_tibble(rownames='PET') %>%

mutate(Model = 'MCMC',

Parameter = rep(c('a', 'b', 'c'), each=nrow(pfdata))) %>%

select(PET, Parameter, Estimate, Model)

model_outcome_comparison <- bind_rows(nls_nlme_comparison, hill_mlbayes_outcomes)

ggplot(model_outcome_comparison, aes(x=Estimate, colour=Model, fill=Model)) +

geom_density(alpha=0.3) +

scale_fill_manual(values=colourpal) +

scale_colour_manual(values=colourpal) +

facet_wrap(~Parameter, scales="free")

```

Here we can see the shrinkage of the parameters towards the respective means for the NLME and MCMC estimates.

## Fits

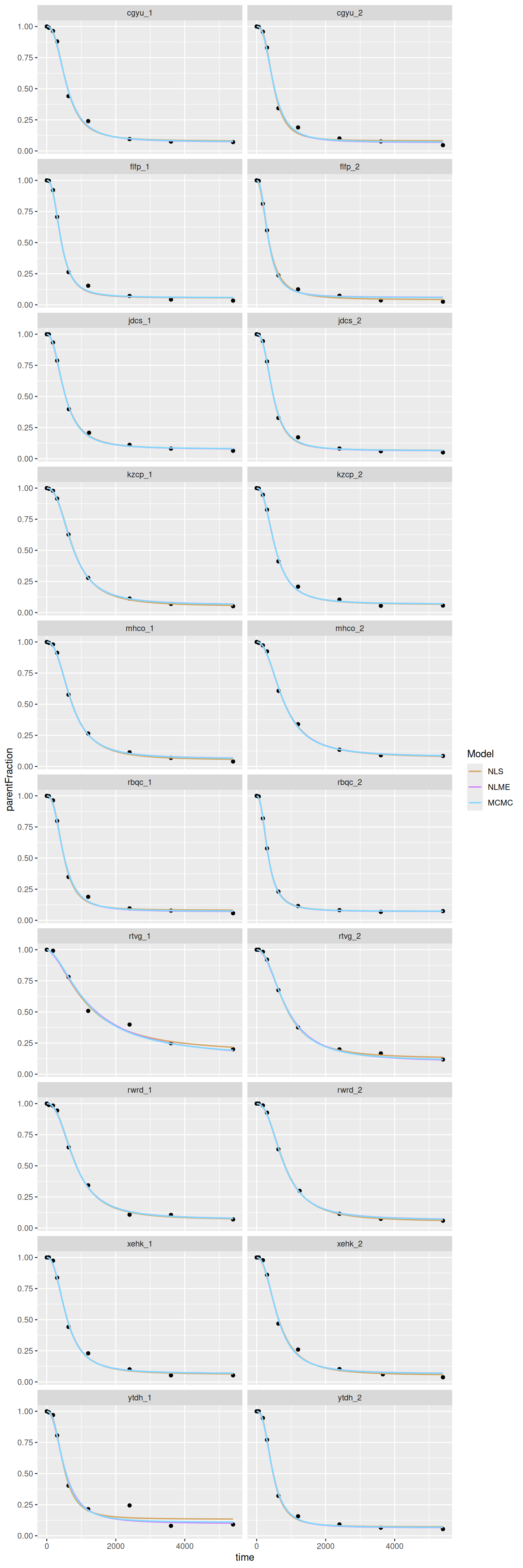

Let's also compare fits. I'll do it with larger panels so we can look a bit more closely to see any differences.

```{r, fig.height=24, fig.width=8}

preddata <- tibble(

PET = hill_nlspreds$PET,

time = hill_nlspreds$time,

NLS = hill_nlspreds$.fitted,

NLME = hill_nlmepreds$.fitted,

MCMC = hill_mlbayes_fitted$Estimate

) %>%

gather(Model, parentFraction, -PET, -time) %>%

mutate(Model = fct_inorder(Model)) %>%

arrange(PET, time, Model)

ggplot(pf_modeldata, aes(x=time, y=parentFraction)) +

geom_point() +

geom_line(data=preddata, aes(y=parentFraction, colour=Model),

size=0.7) +

facet_wrap(~PET, ncol=2) +

scale_colour_manual(values=colourpal)

```

The curves are pretty close. We see that the second-to-last has the biggest deviations between methods, as we'd expect.

# Conclusions

Here I've gone through how to perform nonlinear modelling using nonlinear least squares (NLS, using the `minpack.lm` and `nls.multstart` packages), multilevel maximum likelihood estimation (using the `nlme` package), and multilevel Bayesian modelling (using `brms`, which makes use of STAN). Of course it's easiest to use the former, but we get the least information back, and are the most vulnerable to measurement error or inaccuracies in the data. Multilevel modelling allows us to take advantage of the similarities between individuals to derive better estimates. Using Bayesian methods, we can incorporate the knowledge that we already have based on previous experience, or previous studies, and gives us more information, and more flexibility. The Bayesian models require more thought though, and takes more computational resources.

I hope this has been helpful! I know it certainly has been for me, to be able to flick back to to copy the syntax and modify from when running these types of models in new scenarios.

<!-- # Assessing accuracy -->

<!-- In this comparison, we did not alert the models to the fact that each individual was measured twice. The models simply fit each curve as if it came from a new individual. What we can do, then, to assess the accuracy of each method, is to assume that each individual should have similar outcome parameters, and similar predicted values for each of their two measurements, and assess the degree to which the model was similar across the two PET measurements. -->

<!-- ## Outcome Parameters -->

<!-- ```{r} -->

<!-- outcome_compare <- model_outcome_comparison %>% -->

<!-- separate(PET, c("subject", "session"), sep="_", convert=T) -->

<!-- outcome_compare_1 <- filter(outcome_compare, session==1) %>% -->

<!-- rename(Estimate_PET1 = Estimate) %>% -->

<!-- select(-session) -->

<!-- outcome_compare_2 <- filter(outcome_compare, session==2) %>% -->

<!-- rename(Estimate_PET2 = Estimate) %>% -->

<!-- select(-session) -->

<!-- outcome_compare_12 <- full_join(outcome_compare_1, outcome_compare_2) -->

<!-- ``` -->

<!-- ```{r} -->

<!-- ggplot(outcome_compare_12, aes(x=Estimate_PET1, y=Estimate_PET2, colour=Model)) + -->

<!-- geom_point() + -->

<!-- geom_smooth(method="lm") + -->

<!-- facet_wrap(Model ~ Parameter, scales="free") -->

<!-- ``` -->

<!-- ```{r} -->

<!-- outcome_compare_r <- outcome_compare_12 %>% -->

<!-- filter(!is.na(Estimate_PET2)) %>% -->

<!-- group_by(Parameter, Model) %>% -->

<!-- summarise(R = cor(Estimate_PET1, Estimate_PET2)) -->

<!-- knitr::kable(outcome_compare_r) -->

<!-- ``` -->

<!-- Eh, this isn't very informative. Probably means that the parameters themselves are not particularly identifiable. -->

<!-- ## Predicted values -->

<!-- First I'll assign a bunch of times to check at. -->

<!-- ```{r} -->

<!-- times <- data.frame(Time=c(60, 180, 300, 630, 1200, 2400, 3600, 5400)) -->

<!-- measurements <- unique(select(pf_modeldata, subject, session)) -->

<!-- pred_compare <- crossing(times, measurements) %>% -->

<!-- mutate(PET = paste(subject, session, sep="_")) %>% -->

<!-- arrange(subject, session, Time) -->

<!-- hill_nls_preds <- pfdata %>% -->

<!-- mutate(PET=paste0(subject, '_', session)) %>% -->

<!-- select(PET, hill_nls_fit) %>% -->

<!-- mutate(preds = map(hill_nls_fit, ~predict(.x, newdata=times))) %>% -->

<!-- select(-hill_nls_fit) %>% -->

<!-- unnest() -->

<!-- nls_pred <- bind_cols(pred_compare, hill_nls_preds) %>% -->

<!-- select(-PET1, NLS=preds) -->

<!-- nlme_pred <- pred_compare %>% -->

<!-- mutate(NLME=predict(hill_nlme_fit, newdata = pred_compare)) -->

<!-- mcmc_pred <- pred_compare %>% -->

<!-- mutate(MCMC=predict(hill_multilevelbayes_fit, newdata = pred_compare)[,1]) -->

<!-- allpred <- full_join(nls_pred, nlme_pred) %>% -->

<!-- full_join(mcmc_pred) %>% -->

<!-- gather(Model, Estimate, -Time, -subject, -session, -PET) -->

<!-- allpred_1 <- filter(allpred, session==1) %>% -->

<!-- rename(Estimate_PET1 = Estimate) %>% -->

<!-- select(-PET, -session) -->

<!-- allpred_2 <- filter(allpred, session==2) %>% -->

<!-- rename(Estimate_PET2 = Estimate) %>% -->

<!-- select(-PET, -session) -->

<!-- allpred_12 <- full_join(allpred_1, allpred_2) %>% -->

<!-- filter(!is.na(Estimate_PET2)) -->

<!-- ``` -->

<!-- Now let's compare -->

<!-- ```{r, fig.width=8, fig.height=12} -->

<!-- ggplot(allpred_12, aes(x=Estimate_PET1, y=Estimate_PET2, colour=Model)) + -->

<!-- geom_point() + -->

<!-- geom_smooth(method="lm") + -->

<!-- facet_wrap(Time ~ Model, scales="free", nrow=8) -->

<!-- ``` -->

<!-- ```{r} -->

<!-- allpred_compare_r <- allpred_12 %>% -->

<!-- filter(!is.na(Estimate_PET2)) %>% -->

<!-- group_by(Time, Model) %>% -->

<!-- summarise(R = cor(Estimate_PET1, Estimate_PET2)) %>% -->

<!-- arrange(Time, Model) -->

<!-- ggplot(allpred_compare_r, aes(x=time, y=R, colour=Model, group=Model)) + -->

<!-- geom_point() + -->

<!-- geom_line() -->

<!-- ``` -->

<!-- Eh, not super informative either. -->